AI-Assisted File Upload

For Cleaner, More Complex Data

The Project

The Problem: Users importing large Excel inventories discovered errors only after upload, leading to hours of manual cleanup, low feature adoption, and distrust in the platform.

CyberOwl is a cybersecurity company headquartered in the UK, offering cyber risk monitoring and resolution for the maritime industry globally.

I led UX for a new file upload feature that helps users import large Excel-based inventories into the platform. The design focused on catching errors early and using AI to offer helpful, contextual suggestions that reduce manual effort.

For: CyberOwl

My Role: Product Designer

Tools: Figma

Focus areas:

UI Design

AI/ML Integration

User Flow Design

Data Validation UX

Usability Testing

Results

838 AI suggestions accepted by users in the first 3 months, indicating that users found the recommendations helpful and accurate

Feature adoption increased by 25% as users gained confidence in uploading large inventories without fear of errors

Reduced manual onboarding time for enterprise clients by eliminating back-and-forth data cleanup between users and Customer Success

The challenge:

Maritime organizations maintain OT asset inventories in Excel—often hundreds of devices with 15+ data fields each. Uploading this data into CyberOwl was error-prone: users only discovered problems after upload, error messages were technical and unclear, and duplicate/missing data meant hours of manual cleanup. This led to low feature adoption and distrust in the platform.

My role:

I led the project end-to-end, from initial user research to final interaction design. I worked closely with developers, the AI engineering lead, and our PM to ensure technical feasibility and smooth integration into the platform’s existing inventory workflows.

The research:

I conducted user interviews (6 participants) to understand current workflows and pain points:

Identified Excel as the primary tool used to build and maintain inventories

Mapped out common issues with upload attempts — including naming mismatches, missing required fields, and unfamiliar terminology

I then worked with ML engineers to define what the AI should suggest vs. what users should control. I designed and tested a multi-step validation flow that surfaced errors early and offered intelligent, transparent recommendations.

The solution:

I designed an early validation system that flags errors before processing (missing fields, duplicates, format mismatches) and an AI-powered recommendation layer that suggests fixes—like renaming duplicates based on zone data or inferring manufacturers from MAC addresses—with transparent explanations for each suggestion. Users stay in control with accept/reject options for every AI recommendation.

The research

I started with user interviews to understand how people currently manage and upload asset data.

Discovery interviews with 6 IT managers and fleet operators:

How do you currently maintain your OT inventory?

What happens when you try to upload it into security tools?

What makes a "good" vs. "bad" upload experience?

Key findings:

Users maintain Excel as their source of truth and expect platforms to adapt to their format, not the other way around

Most errors stem from naming inconsistencies ("Router-1" vs. "Router 1") and missing required fields that users didn't know were required

Users wanted to fix errors during upload, not after — "Show me what's wrong before I commit"

Trust in AI was conditional — "I'll use it if I can see why it's suggesting something"

Defining the AI's Role

I worked closely with the engineering team to define what the AI should do vs. what the user should control.

This wasn't just a design challenge—it was a strategic decision about where automation helps vs. where it undermines trust.

AI should:

Detect errors early (duplicates, missing fields, format mismatches)

Suggest likely fixes only when confidence is high (above 85%)

Infer missing data from patterns (e.g., manufacturer from MAC address)

Provide transparent reasoning ("Based on MAC address prefix")

AI should NOT:

Auto-fix without user approval (breaks trust in cybersecurity context)

Make high-stakes decisions silently (e.g., changing asset criticality)

Suggest when confidence is low (creates more confusion than value)

The principle: AI as assistant, not autopilot. Users stay in control, but with intelligent support.
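To make the "assistant, not autopilot" rule concrete, here is a minimal sketch of how a suggestion could be gated by confidence and always carry its reasoning. It is an illustration only, not CyberOwl's implementation: the `Suggestion` type, the function names, the default confidence value, and the one-entry prefix table are all assumptions (a real lookup would draw on the full IEEE OUI registry).

```python
from dataclasses import dataclass
from typing import List, Optional

CONFIDENCE_THRESHOLD = 0.85  # mirrors the "above 85%" rule described above

# Illustrative one-entry table (assumption); a real system would load the IEEE OUI registry.
OUI_TO_MANUFACTURER = {"00000C": "Cisco"}


@dataclass
class Suggestion:
    field: str
    value: str
    confidence: float
    reason: str  # always shown to the user alongside the suggestion


def mac_prefix(mac: str) -> str:
    """Return the first six hex digits of a MAC address, uppercased."""
    digits = "".join(ch for ch in mac if ch.isalnum()).upper()
    return digits[:6]


def infer_manufacturer(mac: str, confidence: float = 0.95) -> Optional[Suggestion]:
    """Propose a manufacturer from the MAC prefix; the confidence value is a placeholder."""
    manufacturer = OUI_TO_MANUFACTURER.get(mac_prefix(mac))
    if manufacturer is None:
        return None
    return Suggestion("manufacturer", manufacturer, confidence, "Based on MAC address prefix")


def surfaced(suggestions: List[Suggestion]) -> List[Suggestion]:
    """Keep only high-confidence suggestions. Nothing is applied here: the user decides."""
    return [s for s in suggestions if s.confidence > CONFIDENCE_THRESHOLD]
```

Note that `surfaced` only filters what the user sees. Applying a value remains a separate, explicit user action, which is the point of the principle.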

The Solution

I designed a four-step upload flow that balanced automation with user control:

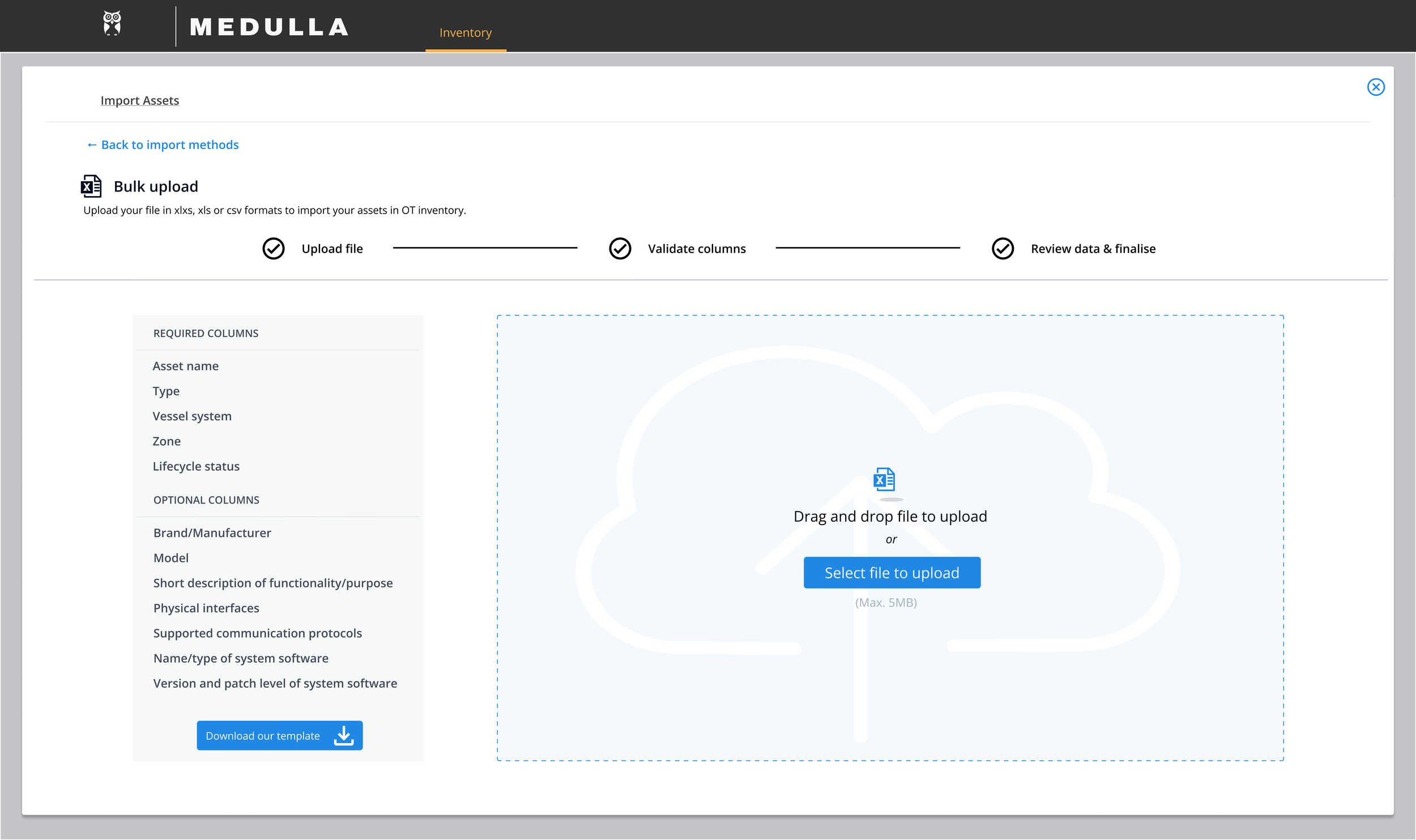

Step 1: Upload File

Users upload their Excel file or download a pre-formatted template to ensure column structure matches CyberOwl's requirements from the start.

Design decision: Offering a template upfront reduces errors before they happen. Users who maintain their own Excel formats can still upload, but the template option gives them a "known good" starting point.

What happens:

User selects their file

System performs initial scan to detect file structure

Template download option prominently displayed for users who want to avoid column mapping issues

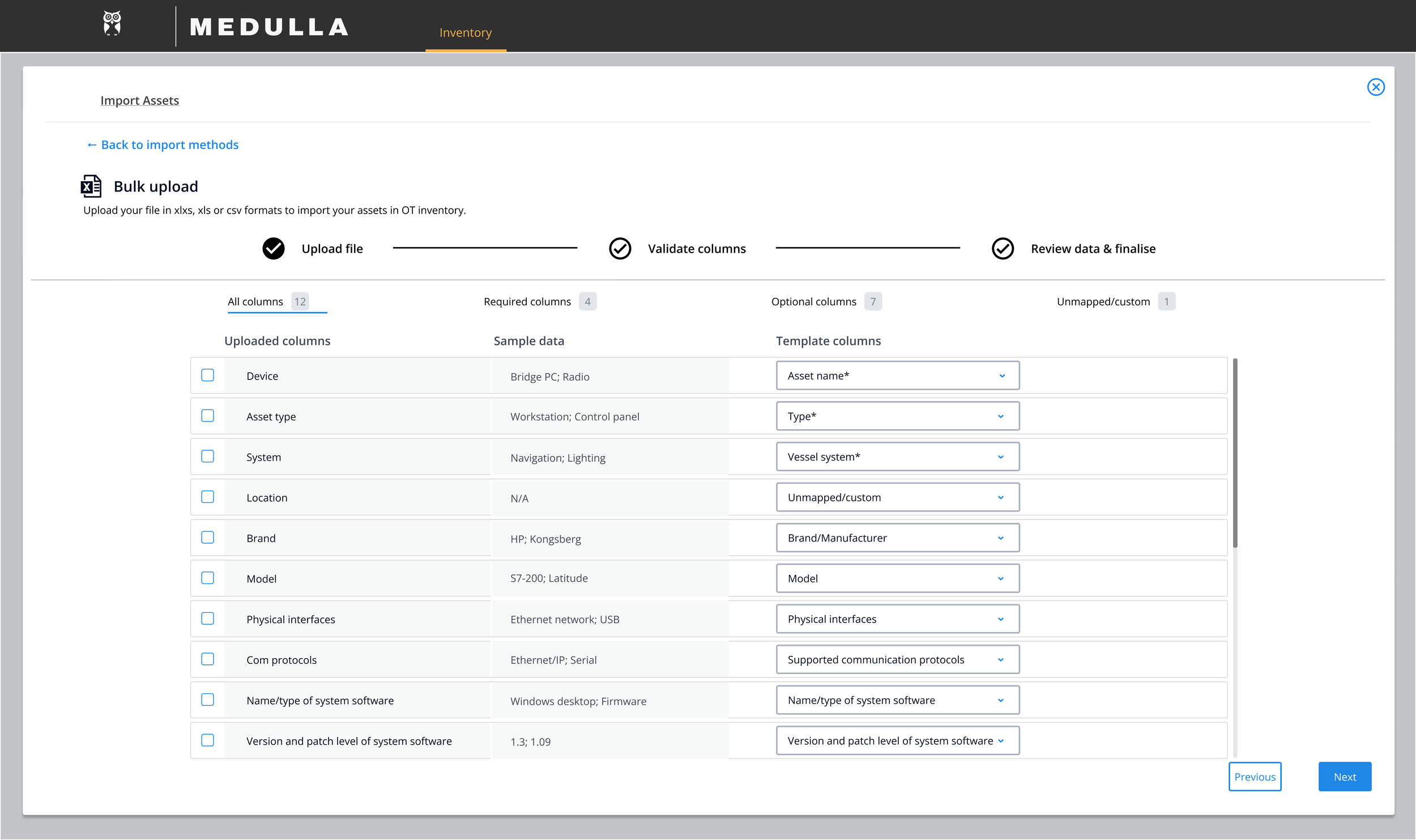

Step 2: Validate Columns

Since many users maintain their own Excel templates with custom column names, this step lets them map their columns to CyberOwl's recognized fields.

Example: User has "Device Name" → Map to CyberOwl's "Asset Name"

Example: User has "MAC" → Map to CyberOwl's "MAC Address"

Design decision: Rather than forcing users to rename their Excel columns, we adapt to their format. This respects their existing workflows while ensuring data maps correctly to our system.

What happens:

System auto-detects likely column matches based on header names

Users review and confirm mappings

Mismatched or unrecognized columns are flagged for manual mapping

Users can save column mappings for future uploads (reducing repeat work)
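As a rough sketch of how header-based auto-detection might behave, the example below normalizes each uploaded header, checks a small alias table, and falls back to fuzzy matching with Python's standard `difflib`. The field list, the alias table, and the 0.8 cutoff are assumptions for illustration; the production matching logic may differ.

```python
import difflib
from typing import Dict, List, Optional

# Hypothetical subset of recognized fields and known aliases.
RECOGNIZED_FIELDS = ["Asset Name", "MAC Address", "Manufacturer", "Zone"]
KNOWN_ALIASES = {"device name": "Asset Name", "mac": "MAC Address"}


def normalize(header: str) -> str:
    """Lowercase, trim, and collapse underscores and repeated spaces."""
    return " ".join(header.strip().lower().replace("_", " ").split())


def suggest_mapping(headers: List[str]) -> Dict[str, Optional[str]]:
    """Map each uploaded header to a recognized field, or None if it needs manual mapping."""
    by_normalized = {normalize(field): field for field in RECOGNIZED_FIELDS}
    mapping: Dict[str, Optional[str]] = {}
    for header in headers:
        key = normalize(header)
        if key in KNOWN_ALIASES:
            mapping[header] = KNOWN_ALIASES[key]
            continue
        close = difflib.get_close_matches(key, list(by_normalized), n=1, cutoff=0.8)
        mapping[header] = by_normalized[close[0]] if close else None
    return mapping
```

Headers that come back as `None` correspond to the "flagged for manual mapping" case, and the returned dictionary is the kind of object that could be saved and reused for a later upload.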

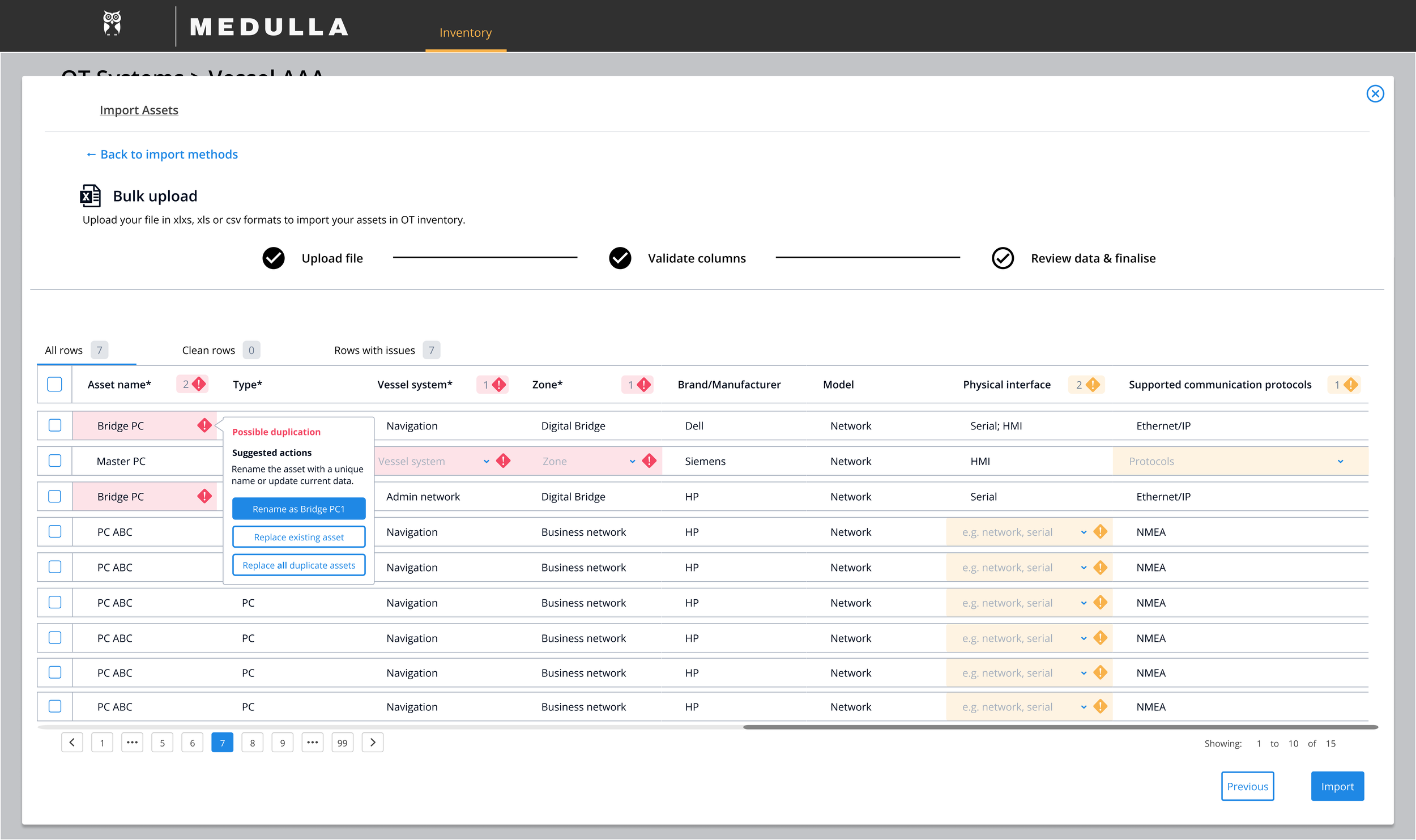

Step 3: Review & Approve (AI-Powered Validation)

This is where AI comes into play. Once columns are mapped, the system validates the actual data and offers intelligent suggestions to fix errors.

The AI detects and suggests fixes for:

Duplicate asset names:

AI suggests renames based on zone data or existing patterns

Example: "Router" appears 5 times → Suggests "Router-Bridge", "Router-Engine Room" based on location fields

Missing required fields:

AI flags missing data and, where possible, infers values

Example: Missing manufacturer → AI suggests "Cisco" based on MAC address prefix (with confidence indicator)

Format inconsistencies:

AI suggests standardized values to match existing fleet data

Example: User enters "Nav system" → AI suggests "Navigation System" to maintain consistency

Data quality issues:

Flags potential problems like duplicate MAC addresses or unusual criticality assignments
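The duplicate-name case can be sketched in a few lines. This is a simplified illustration under stated assumptions: rows are plain dictionaries with hypothetical `name` and `zone` keys, and a rename is proposed only when it would produce a unique name. Rows that cannot be disambiguated by zone are left for the user to fix manually.

```python
from collections import Counter
from typing import Dict, List


def suggest_renames(rows: List[dict]) -> Dict[int, str]:
    """Return {row index: suggested name} for duplicated names that a zone can disambiguate."""
    name_counts = Counter(row["name"] for row in rows)

    # Build a candidate "<name>-<zone>" for every duplicated row that has a zone.
    candidates = {
        index: f'{row["name"]}-{row["zone"]}'
        for index, row in enumerate(rows)
        if name_counts[row["name"]] > 1 and row.get("zone")
    }

    # Only suggest candidates that are unique and do not clash with an existing name.
    candidate_counts = Counter(candidates.values())
    return {
        index: name
        for index, name in candidates.items()
        if candidate_counts[name] == 1 and name not in name_counts
    }
```

For example, two rows named "Router" in zones "Bridge" and "Engine Room" would receive the suggestions "Router-Bridge" and "Router-Engine Room", while a third "Router" with no zone would get no suggestion.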

User options for each row:

Create new asset (if it doesn't exist in the system yet)

Replace existing asset (update an existing entry with new data)

Skip/fix (if there are blocking errors)

How suggestions work:

Each AI recommendation appears inline with the problematic row/field

Users see why the AI is suggesting it ("Based on MAC address prefix" or "Matches 3 existing assets")

Users can accept, reject, or manually edit each suggestion

Bulk actions available for users who trust the recommendations

Design decision: AI suggestions are opt-in, not automatic. In cybersecurity contexts, users need to feel in control of their data. Transparency (showing reasoning) builds trust; auto-fixes would undermine it.

Final review:

Users see a summary of all changes (AI suggestions accepted, manual edits, flagged errors)

Once all critical errors are resolved, users confirm the import

System provides upload summary: X assets imported, Y AI suggestions used, Z manual edits made
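The summary counts can be derived from per-row outcomes. The sketch below assumes a hypothetical `RowOutcome` record and assumes that rows with unresolved blocking errors are skipped rather than imported; the field names are illustrative, not the platform's data model.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class RowOutcome:
    ai_accepted: int = 0    # AI suggestions the user accepted on this row
    manual_edits: int = 0   # fields the user edited by hand
    blocked: bool = False   # row still has a blocking error


def upload_summary(rows: List[RowOutcome]) -> Dict[str, int]:
    """Count imported rows, accepted AI suggestions, manual edits, and skipped rows."""
    importable = [row for row in rows if not row.blocked]
    return {
        "imported": len(importable),
        "ai_suggestions_used": sum(row.ai_accepted for row in importable),
        "manual_edits": sum(row.manual_edits for row in importable),
        "skipped": len(rows) - len(importable),
    }
```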

The design

Step 1 - File Upload:

Users can upload their existing Excel file or download CyberOwl's template to ensure proper formatting from the start.

Step 2 - Column Validation:

Users map their custom column names to CyberOwl's recognized fields. Auto-detection suggests likely matches, reducing manual mapping work.

Step 3 - Data Review (AI-Powered):

Errors flagged inline with AI suggestions. Clicking into a row shows what's wrong and what the AI recommends, with clear reasoning for each suggestion.

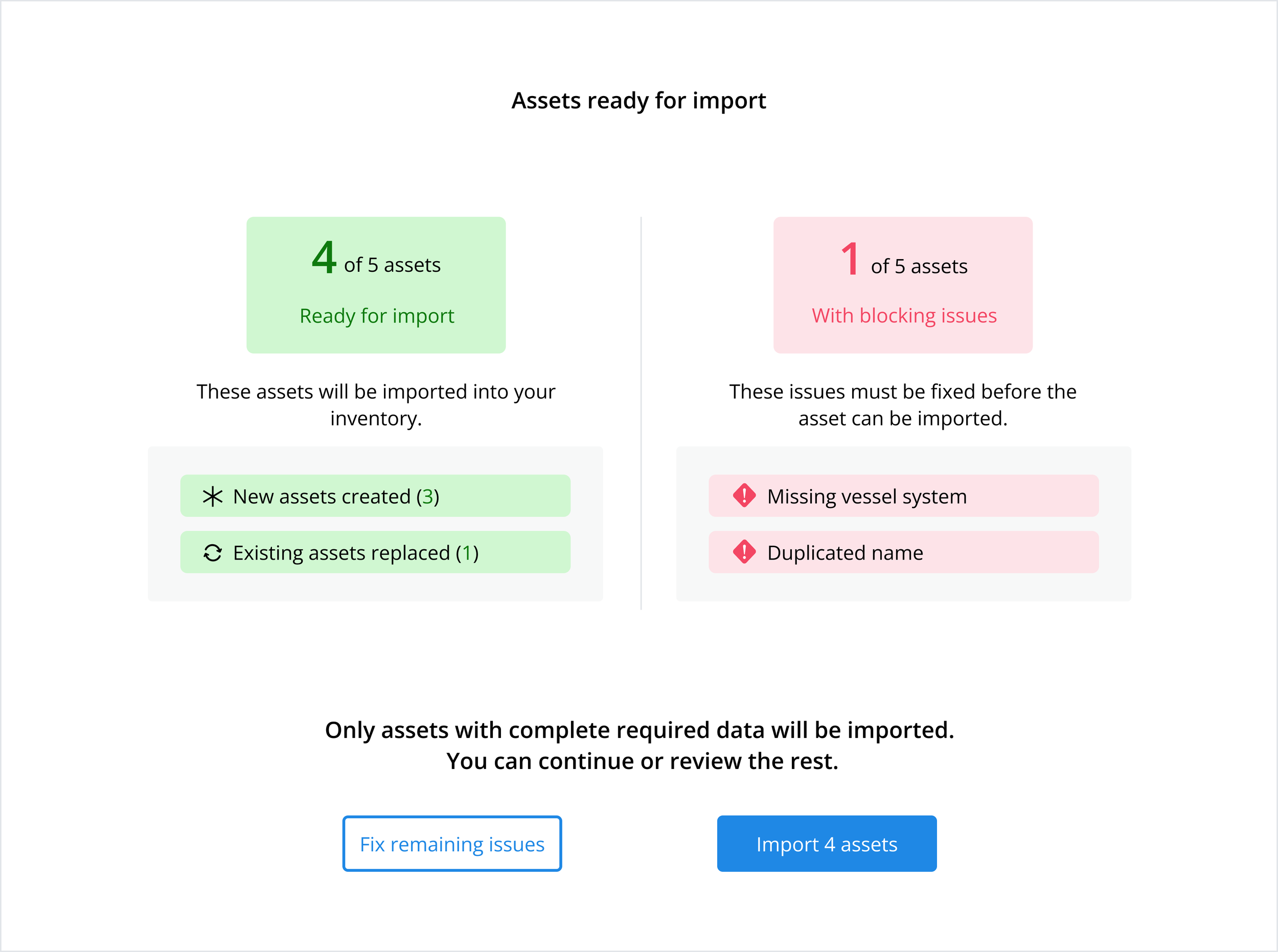

Final Confirmation:

Summary modal shows count of assets ready to import (new vs. replaced), assets with blocking errors, and clear action paths: fix errors or proceed with partial import.

Reflection & What I Learned

This project reinforced a principle that now shapes how I approach AI-powered features: the UX challenge isn't making AI work—it's helping users trust it.

The most valuable work happened in workshops with the development team, defining what the AI should suggest and at what confidence threshold. Questions like "Should we auto-fill or suggest?" or "How do we explain why the AI is recommending something?" were design decisions as much as engineering ones. I learned that designing AI features means designing the intelligence layer itself, not just the interface around it.

What changed after launch:

Customer Success reported fewer "data cleanup" support tickets from enterprise clients

The validation UI components (error states, suggestion patterns) were added to the design system for reuse

The project demonstrated the value of AI assistance in CyberOwl's product, opening conversations about where else intelligent suggestions could reduce manual work

What I'd do differently:

I would have tested saved column mapping presets earlier. Some users uploaded monthly fleet updates and had to remap columns each time until we added the "save mapping" feature post-launch. Testing this in the prototype phase would have caught that workflow need sooner.

The principle I carry forward:

When designing AI-powered features, transparency (showing why) and control (letting users override) are non-negotiable in high-stakes domains like cybersecurity. AI should feel like a helpful colleague who explains their reasoning, not a black box that dictates answers. Users will trust intelligent systems when they understand them—not when they're told to.